|

| HOME |

| BIO |

| PUBLICATIONS |

| arXiv Papers |

| G-Scholar Profile |

| SOFTWARE |

| CBLL |

| RESEARCH |

| TEACHING |

| WHAT's NEW |

| DjVu |

| LENET |

| MNIST OCR DATA |

| NORB DATASET |

| MUSIC |

| PHOTOS |

| HOBBIES |

| FUN STUFF |

| LINKS |

| CILVR |

| CDS |

| CS Dept |

| Courant |

| NYU |

| Websites that I maintain |

|

|

|

|

|

Yann LeCun,

Executive Chairman, Advanced Machine Intelligence (AMI Labs)

Jacob T. Schwartz Professor of Computer Science, Data Science, Neural Science, and Electrical and Computer Engineering,

Courant Institute - School of Mathematics, Computing, and Data Science,

New York University.

ACM Turing Award Laureate, (sounds like I'm bragging, but a condition of accepting the award is to write this next to your name)

Member, National Academy of Engineering, National Academy of Sciences, Académie des Sciences

Fellow, ACM, AAAI, AAAS, SIF

last updated: 2025-05-26

| Social Networks |

Threads/Fediverse: @yannlecun (ML/AI, announcements, photos, politics)

LinkedIn: yann-lecun (ML/AI research and industry, announcements)

Facebook: yann.lecun (general, science, ML/AI, culture, hobbies, photos)

BlueSky: @yann-lecun.bsky.social (Not using it much)

Twitter/X: @ylecun (I no longer write posts on X)

Note: X has devolved into an antagonistic propaganda tool. As of

December 2024, I no longer write posts on X. As a favor to my numerous

followers, I tweet links to posts on other platforms (occasionally), I

retweet interesting contents from others (sometimes), and I comment on

tweets by friends (rarely). But I don't write substantial content.

| Biography / CV |

bios of various lengths in English and en francais

| Contact Information |

NYU Affiliations:

CILVR Lab (Computational Intelligence, Learning, Vision, Robotics)

Computer Science Department

Center for Data Science, NYU

Courant Institute - School of Mathematics, Computing, and Data Science

Center for Neural Science, Faculty of Arts and Sciences

Department of Electrical and Computer Engineering, NYU Tandon School of Engineering

New York University

Assistants

Executive Assistant - AMI Labs: Sean Nguyen: sean[at]amilabs.xyz

Administrative Aide - NYU: Hong Tam +1-212-998-3374 hongtam[at]cs.nyu.edu

FOR INVITATIONS TO SPEAK: please send email to lecuninvites[at]gmail.com

(I really can't handle invitations sent to other email addresses)

IF YOU REALLY NEED ME TO DO SOMETHING FOR YOU: (e.g. a review, a letter...) please send email to Sean Nguyen sean[at]amilabs.xyz

NYU coordinates:

Address: Room 516, 60 Fifth Avenue, New York, NY 10011, USA.

Email: yann.lecun[at]nyu.edu (I may not respond right away)

Phone: +1-212-998-3283 (I am very unlikely to respond or listen to voice mail in a timely manner)

AMI Labs Coordinates:

Email: yann[at]amilabs.xyz (I may not respond right away)

| Publications, Talks, Courses, Videos |

Main Research Interests:

AI, Machine Learning, Computer Vision,

Robotics, and Computational Neuroscience. I am also interested

Physics of Computation, and many applications of machine learning.

Publications:

Google Scholar

Papers on OpenReview.net

Preprints on ArXiv

Publications up to 2014 with PDF and DjVu

Talks / Slide Decks:

Slides of (most of) my talks

Deep Learning Course:

Deep Learning course at NYU:

Complete course on Deep Learning, with all the material available on line including lectures and practicums, videos, slide decks, homeworks, Jupyter notebooks, and transcripts in several languages.

Videos: Playlists on YouTube:

- Talks by Yann LeCun

- Lectures Series by Yann LeCun

- Debates and Panels with Yann LeCun

- Interviews of Yann LeCun

- Demos by Yann LeCun

- Six short videos to explain AI, Machine Learning, Deep Learning and Convolutional Nets (2016)

| Working Paper |

A Path Towards Autonomous Machine Intelligence

(June 2022)How could machines learn as efficiently as humans and animals? How could machines learn to reason and plan? How could machines learn representations of percepts and action plans at multiple levels of abstraction, enabling them to reason, predict, and plan at multiple time horizons? This position paper proposes an architecture and training paradigms with which to construct autonomous intelligent agents. It combines concepts such as configurable predictive world model, behavior driven through intrinsic motivation, and hierarchical joint embedding architectures trained with self-supervised learning.

Recent lectures on the topic:

- 2025-09-16: Self-Supervised Learning, JEA, World Models, and the Future of AI¨

Harvard Center for Mathematical Sciences and Applications Video on YouTube

- 2025-04-27: "Shaping the Future of AI"

Distinguished Lecture at National University of Singapore University.

Video on YouTube

Slide deck - 2024-10-18: "How could machines reach human-level intelligence?"

Distinguished Lecture at Columbia University

Video on Youtube

Slide deck

| Books |

Quand La Machine Apprend

La revolution des neurones artificiels et de l'apprentissage profond (Editions Odile Jacob, Octobre 2019)Exists in Chinese, Japanese, and Russian.

La Plus Belle Histoire de l'Intelligence

Des origines aux neurones artificiels : vers une nouvelle étape de l'évolutionStanislas Dehaene, Yann Le Cun, Jacques Girardon (Éditions Robert Laffont, Octobre 2018)

| Pamphlets and opinions |

How to Build a Vibrant Technology Industry (by attracting scientists to your country) (2025-05-30)

As the US seems set on self-sabotaging its extraordinarily successful system of public research funding, countries in Europe and elsewhere may want to seize the opportunity to reboot their own research ecosystem and jumpstart their technology industry.

Five Ways to Act Deluded, Stupid, Ineffective, or Evil (2025-04-28)

Using ideas from AI research to explain the failure modes of human behavior, with examples from international trade policy.Comments on LinkedIn, Threads, Facebook

AI and the Future of Europe, a Defining Moment (2025-02-09)

by Bernhard Schölkopf, Nuria Oliver, and Yann LeCun.In which we argue that funding from the newly-formed European AI Research Council should go to small groups of talented researchers and not be administered as a large project managed from the top down.

This opinion piece was published in January 2025 simultaneously in Les Échos, Handelsbaltt, and El Pais.

Address to the UN Security Council (2024-12-19)

I was invited by Secretary of State Antony Blinken to speak about AI at the UN Security Council meeting on 2024-12-19. I was followed by Fei-Fei Li, and representatives from UNSC member states.

In my speech, I argued for free/open source foundation models and for international cooperation to train "universal" foundation models that speak all the languages in the world and understand all cultures and value systems.

My speech starts at the 00:12:20 mark in this video

Proposal for a new publishing model in Computer Science (2009-12-01)

Many computer Science researchers are complaining that our emphasis on highly selective conference publications, and our double-blind reviewing system stifles innovation and slow the rate of progress of Science and technology.

This pamphlet proposes a new publishing model based on an open repository and open (but anonymous) reviews which creates a "market" between papers and reviewing entities.

| Students and Postdocs |

Current PhD Students

- Ying Wang (NYU CS with Mengye Ren) [SSL for control and planning]

- Yilun Kuang (NYU CS) [SSL for control and planning]

- Basile Terver (FAIR-ENS with Jean Ponce) [SSL for control and planning]

- Gaoyue ''Kathy'' Zhou (NYU CS with Lerrel Pinto) [SSL for control and planning]

- Wangcong ''Kevin'' Zhang (NYU CS) [SSL for planning]

- Megi Dervishi (FAIR-Université Paris-Dauphine with Alexandre Allauzen) [SSL for text]

Current Postdocs

- Théo Bodrito (Meta-FAIR, 2025- ) [SSL]

- Ziyu Wang (NYU, 2025- ) [SSL and music]

- Quentin Le Lidec (NYU, 2025-) [Planning and Control]

- Oumayma Bounou (NYU, 2025-) [Planning and Control]

- Gelareh Naseri (NYU, 2021-) [music synthesis and composition]

Former PhD Students

- Shengbang ''Peter'' Tong (2026 NYU CS with Saining Xie) [SSL for video]. AMI Labs.

- Jiachen Zhu (2025 NYU CS) [SSL and optimization], Skild AI.

- Vlad Sobal (2025, NYU CDS) [SSL for planning and control], Amazon

- Quentin Garrido (2025, FAIR-Université Gustave Eiffel with Laurent Najman) [SSL for images and video] FAIR-Paris.

- Alexander Rives (2024 NYU CS) [Protein design] FAIR, CEO Evolutionary Scale, MIT EECS & Broad Institute, Head of Science CZI.

- Katrina Drozdov Evtimova (2024 NYU CDS) [latent variable JEPA]

- Adrien Bardes (2024 FAIR-INRIA with Jean Ponce) [SSL, VICReg, I-JEPA, V-JEPA]. FAIR

- Zeming Lin (2023 NYU CS) [Transformers for protein structure]. FAIR, EvolutionaryScale AI

- Aishwarya Kamath (2023 NYU CDS) [vision-language models] DeepMind

- Junbo ``Jake'' Zhao (2019 NYU CS) [energy-based models] faculty Zhejiang University

- Xiang Zhang (2018 NYU CS) [deep learning for NLP] Element AI, Google AI, startup

- Mikael Henaff (2018 NYU CS) [deep learning for control] Microsoft Research, FAIR

- Remi Denton (2018 NYU CS, with Rob Fergus) [video prediction] Google

- Sainbayar Sukhbaatar (2018, NYU CS with Rob Fergus) [memory, intrinsic motivation, multiagent communication] FAIR

- Michael Mathieu (2017 NYU CS) [DL for video prediction and image understanding] DeepMind

- Jure Zbontar (2016 U. of Ljubljana, co-advised) [DL for stereo vision] NYU, FAIR, Tesorai, OpenAI

- Sixin Zhang (2016 NYU CS) [paralellized deep learning] ENS-Paris, faculty Institut National Polytechnique de Toulouse

- Wojciech Zaremba (2016 NYU CS with Rob Fergus) [algorithm synthesis] OpenAI

- Rotislav Goroshin (2015 NYU CS) [unsupervised representation learning] DeepMind

- Pierre Sermanet (2014 NYU CS) [DL for vision and mobile robot perception] Google Brain, DeepMind

- Clément Farabet (2014 U. Gustave Eiffel with Laurent Najman) [dedicated hardware for ConvNets, vision, Torch-7] Twitter, Nvidia, VP of Research DeepMind

- Fu Jie Huang (2013 NYU CS) [DL for vision] Milabra, Kanerai

- Kevin Jarrett (2012 NYU Neural Science) [DL models of biological vision] Bridgewater,...,Barclays

- Matthew Grimes (2012 NYU) [SLAM] Cambridge, DeepMind

- Y-Lan Boureau (2012, NYU-INRIA with Jean Ponce) [sparse feature learning for vision] Flatiron Institute, FAIR, CEO ThrivePal)

- Koray Kavukcuoglu (2010, NYU) [sparse auto-encoders for unsupervised feature learning] NEC Labs, VP of GenAI DeepMind, CTO DeepMind, Chief AI Architect Google.

- Piotr Mirowski (2010 NYU) Bell Labs, Microsoft, DeepMind

- Ayse Naz Erkan (2010 NYU, with Yasemine Altun) Twitter, Robinhood, CEO Laminar AI.

- Marc'Aurelio Ranzato (2009 NYU) Google X-Labs, FAIR, DeepMind.

- Sumit Chopra (2008 NYU) AT&T Labs-Research, FAIR, Imagen, faculty NYU.

- Raia Hadsell (2008 NYU) SRI, VP Foundations DeepMind

- Feng Ning (2006 NYU) Bank of America, Société Générale, ScotiaBank, AQR Capital, VP AllianceBernstein.

Former Postdocs

- Ravid Schwartz-Ziv (NYU, 2023-2025) Meta-FAIR

- Amir Bar (FAIR, 2024-2025), Meta-FAIR, Imperial College & AMI Labs

- Micah Goldblum (NYU 2021-2024), Columbia University

- Grégoire Mialon (FAIR 2021-2023), Meta-MSL

- Randall Balestriero (FAIR 2021-2023), Brown University & AMI Labs

- Nicolas Carion (NYU 2020-2022), Meta-FAIR

- Yubei Chen (FAIR 2020-2022), UC Davis

- Li Jing (FAIR 2019-2021), OpenAI, AMI Labs

- Jacob Browning (NYU 2019-2023): philosophy and history of AI (Berggruen Transformation of the Human program)

- Phillip Schmitt (NYU 2019-2021): AI and the visual arts (Berggruen Transformation of the Human program)

- Stéphane Deny (FAIR 2019-2021), U of Aalto

- Alfredo Canziani (NYU 2017-2022), NYU: autonomous driving, AI education

- Behnam Neyshabur (NYU 20172019), Google, DeepMind/BlueShift, Anthropic, stealth startup

- Jure Zbontar (NYU 2016-2017). FAIR, OpenAI: temporal prediction

- Anna Choromanska (NYU 2014-2017) NYU Tandon: applied mathematics

- Pablo Sprechmann (NYU 2014-2017), DeepMind: applied mathematics and signal processing

- Joan Bruna (NYU 2012-2014), FAIR, UC Berkeley, NYU: applied mathematics

- Camille Couprie (NYU 2011-2013), FAIR: computer vision

- Tom Schaul (NYU 2011-2013), DeepMind: machine learning and optimization

- Jason Rolfe (NYU 2011-2013), D-Wave, Variational AI: computational neuroscience

- Leo Zhu (NYU 2010-2011), CEO Yitu: hierarchical vision models.

- Arthur Szlam (NYU 2009-2011), CUNY, FAIR, DeepMind: applied mathematics.

- Karol Gregor (NYU 2008-2011), Janelia Farm, DeepMind: machine learning.

- Trivikraman Thampy (NYU 2008-2009), CEO Play Games24x7: financial modeling and prediction.

- Joseph Turian (NYU 2007-2007), Founder MetaOptimize: energy-based models.

- Margarita Osadchy (NEC Labs 2002-2003), University of Haifa: energy-based models, face detection with ConvNets.

- Yoshua Bengio (AT&T Bell Labs 1992-1993), MILA - Université de Montréal.

- Patrice Simard (AT&T Bell Labs 1991-1992), AT&T Labs, Microsoft Research.

| Bragging Zone |

Honors and Awards

- Genius Award 14, Liberty Science Center - New Jersey, 2026 [link]

- Inaugural Trailblazer Award, the New York Academy of Sciences, 2025, [link]

- Queen Elizabeth Prize for Engineering, 2025 (shared with Yoshua Bengio, Geoffrey Hinton, John Hopfield (Foundations), Bill Dally, Jensen Huang (Hardware), Fei-Fei Li (Data). [link]

- AMS Josiah Willard Gibbs Lecturer, JMM Seattle, 2025, [link]

- VinFuture Grand Prize, 2024 (shared with Yoshua Bengio, Geoff Hinton, Jensen Huang, Fei-Fei Li), [link], [acceptance speech], [pictures]

- Trailblazer in Science Award, New York Hall of Science, 2024, [link]

- Doctorate Honoris Causa, Université de Genève, 2024, [link], [lecture]

- Professor Honoris Causa, ESIEE / Université Gustave Eiffel, 2024, [link]

- Lifetime Honorary Membership, New York Academy of Sciences, 2024, [link]

- Fellow Association for Computing Machinery, 2024, [link]

- Great Immigrant, Carnegie Corporation of New York, 2024, [link]

- TIME 100 Impact Award, 2024, [link], [pictures]

- Membre d'Honneur, Société Informatique de France, 2024, [link]

- Chevalier de la Légion d'Honneur, France, 2020/2023, [link], [pictures]

- Global Swiss AI Award for outstanding global impact in the field of artificial intelligence, 2023, [link], [pictures]

- Inaugural Professorship, Jacob T. Schwartz Chair in Computer Science, Courant Institute, NYU. 2023, [link]

- Doctorate Honoris Causa, Hong Kong University of Science and Technology, 2023, [link], [pictures]

- Doctorate Honoris Causa, Università di Siena, 2023, [link], [pictures]

- International Association of Engineers Laureate, 2023, [link]

- Princess of Asturias Award, for Technical and Scientific Research (with Demis Hassabis, Yoshua Bengio, and Geoffrey Hinton), 2022, [link], [pictures]

- Foreign Member, Académie des Sciences, France, 2022, [link], [video at 00:53:58]

- Fellow, American Association for the Advancement of Science, 2021, [link]

- Member, US National Academy of Sciences, 2021, [link], [pictures]

- Doctorate Honoris Causa, Université Côte d'Azur, 2021, [link]

- Fellow, Association for the Advancement of Artificial Intelligence, 2020, [link]

- Golden Plate Award, International Academy of Achievement, 2019, [link]

- ACM A.M. Turing Award, 2018 (shared with Geoffrey Hinton and Yoshua Bengio), [link], [pictures]

- Doctorate Honoris Causa, Ecole Polytechnique Fédérale de Lausanne, 2018, [link]

- Holst Medal, Technical University of Eindhoven and Philips Labs, The Netherlands

- Pender Award, University of Pennsylvania, 2018, [link]

- Member, US National Academy of Engineering, Class of 2017, [link]

- Nokia-Bell Labs Shannon Luminary Award, 2017, [interview] [lecture]

- Annual Chair in Computer Science, Collège de France 2015-2016. [link]

- Lovie Lifetime Achievement Award, International Academy of Digital Arts and Sciences, 2016. [link to acceptance speech]

- Inductee, New Jersey Inventor Hall of Fame, 2016. [link]

- Doctorate Honoris Causa, Instituto Politécnico Nacional, Mexico, 2016. [link]

- IEEE Pattern Analysis and Machine Intelligence Distinguished Researcher Award, 2015. [link]

- IEEE Neural Network Pioneer Award, 2014. [link]

- NYU Silver Professorship, 2008.

- Fyssen Foundation Fellowship, 1987.

| In the Media: podcasts, interviews, press articles |

Podcasts in English:

- Unsupervised Learning: With Jacob Effron, 05/2026 YouTube "Yann LeCun on What Comes After LLMs"

- Welch Labs podcast, 04/2026 YouTube "Yann LeCun's $1B Bet Against LLMs "

- Machine Learning: How Did We Get Here? Episode 3, with Tom Mitchell, 03/2026 YouTube "A University and Corporate Perspective with Yann LeCun"

- How I Doctor with Dr. Graham Walker, 02/2026 YouTube "Move Over LLMs! Yann LeCun & Alex LeBrun Debut AMI Labs’ World Models for Healthcare"

- India AI Impact Summit, Fireside chat with Marya Shakil, India, 01/2026 YouTube "AI Godfather LeCun Talks on Why AI Must Work With Humans, Not Replace Them"

- Davos/WEF: Imagination in Action with John Werner, 01/2026 YouTube "Why LLMs Will Not Lead to AGI"

- The Information Bottleneck, 12/2025 Podcast "Yann LeCun – Why LLMs Will Never Get Us to AGI"

- AI Alliance fireside chat, 05/2025 YouTube " Yann LeCun (Meta) with Anthony Annunziata (IBM)"

- U Penn Innovation and Impact Podcast with Vijay Kumar, Episode 7, 04/2025 YouTube "The Future of AI with Yann LeCun"

- AI Inside Podcast with Jeff Jarvis and Jason Howell, 04/2025 YouTube "Human Intelligence is not General Intelligence"

- Newsweek AI Impact series, 04/2025 Newsweek video "an interview with Marcus Weldon and Gabriel Snyder"

- Nvidia GTC, 03/2025 YouTube "Frontiers of AI and Computing: A Conversation with Yann LeCun and Bill Dally"

- This is World with Matt Kawecki, 03/2025 YouTube "AI Needs Physics to Evolve"

- Big Technology Podcast with Alex Kantrowitz, 03/2025 YouTube "Why Can't AI Make Its Own Discoveries? — With Yann LeCun"

- The Economist Babbage 02/2025 The Economist "Machine-learning pioneer Yann LeCun on why “a new revolution in AI” is coming"

- IEEE TEMS podcast with Stephen Ibaraki, 02/2025 YouTube "Podcast of the IEEE Technology and Enginieering Management Society"

- Imagination In Action with John Werner, 02/2025 YouTube "Yann LeCun & John Werner on The Next AI Revolution: Open Source & Risks | IIA Davos 2025"

- Johns Hopkins - Bloomberg Center Discovery Series with Kara Swisher 01/2025 YouTube "Kara Swisher and Meta's Yann LeCun Interview - Hopkins Bloomberg Center Discovery Series"

- Nikhil Kamath, 11/2024 YouTube "WTF is Artificial Intelligence Really? | People by WTF Ep #4" history of AI, how deep learning works, LLMs, JEPA, the future of AI...

- Lex Friedman #416, 03/2024 YouTube "Meta AI, Open Source, Limits of LLMs, AGI & the Future of AI"

- CBS Mornings, 12/2023 YouTube "Interviews of Yann LeCun Meta's Chief AI Scientist Yann LeCun talks about the future of artificial intelligence"

- Twenty Minute VC with Harry Stebbing, 05/2023 Podcast "Yann LeCun on Why Artificial Intelligence Will Not Dominate Humanity..."

- With Andrew Ng, 04/2023 YouTube "Yann LeCun and Andrew Ng: Why the 6-month AI Pause is a Bad Idea"

- Big Technology Podcast with Alex Kantrowitz, 01/2023 YouTube "Is ChatGPT A Step Toward Human-Level AI?"

- Boz to the Future with Andrew Bosworth, 08/2022 Apple Podcasts

- Eye on AI with Craig Smith #150 podcast "World Models, AI Threats and Open Sourcing"

- Lex Friedman #258, 01/2022 YouTube "Dark Matter of Intelligence and Self-Supervised Learning"

- Big Technology Podcast with Alex Kantrowitz, 12/2021 YouTube "Daniel Kahneman and Yann LeCun: How To Get AI To Think Like Humans"

- The Robot Brains Podcast with Pieter Abbeel, 09/2021 YouTube "Yann LeCun explains why Facebook would crumble without AI"

- The Gradient Podcast, 08/2021 The Gradient "Yann LeCun on his Start in Research and Self-Supervised Learning"

- TED with Chris Anderson, 06/2020 Video "Deep learning, neural networks and the future of AI"

- Lex Friedman #36, 08/2019 YouTube "Deep Learning, ConvNets, and Self-Supervised Learning"

- Eye on AI with Craig Smith #114 podcast "Filling the gap in LLMs"

- Eye on AI with Craig Smith #017, 06/2019 video,podcast

- DeepLearning.ai with Andrew Ng, 04/2018 YouTube, "Heroes of Deep Learning: Yann LeCun"

Podcasts en français:

- La Matinale de France Inter, L'invité de Benjamin Duhamel 03/2026 podcast, YouTube "AMI propose 'la prochaine révolution de l'IA, qui comprend le monde réel', déclare Yann Le Cun "

- Generation DIY #397 avec Matthieu Stefani 06/2024 podcast "L’Intelligence Artificielle Générale ne viendra pas de Chat GPT"

- Monde Numérique avec Jérôme Colombain, 04/2024 YouTube "IA : nous aurons tous des assistants intelligents... dans dix ans"

- Toutes mes interviews sur France Inter playlist

- Interview sur Europe1 06/2023 podcast "Yann LeCun : «L'intelligence artificielle va amplifier l'intelligence humaine»"

- Interview sur France Culture 10/2018 podcast sur YouTube "Yann LeCun : Les émotions sont inséparables de l'intelligence"

Press interviews and articles

- Yann LeCun’s AMI Labs raises $1.03B to build world models (Anna Heim, Techcrunch, 03/2026)

- Yann LeCun On Artificial General Intelligence And The Digital Commons (John Werner, Forbes, 01/2026)

- An A.I. Pioneer Warns the Tech ‘Herd’ Is Marching Into a Dead End (Cade Metz, New York Times, 01/2026)

- Yann LeCun’s new venture is a contrarian bet against large language models (Caiwei Chen, MIT Technology Review, 01/2026)

- Who’s behind AMI Labs, Yann LeCun’s ‘world model’ startup (Anna Heim, TechCrunch, 01/2026)

- Elon Musk, Trump, Meta et l’avenir de l’IA : Yann Le Cun, le génie français qui vaut 3 milliards, parle cash (Guillaume Grallet, Le point, 01/2026)

- Computer scientist Yann LeCun: ‘Intelligence really is about learning' (Melissa Heikkilä, Financial Times, 01/2026)

- AI whiz Yann LeCun is already targeting a $3.5 billion valuation for his new startup—and it hasn’t even launched yet (Dave Smith, Fortune, 12/2025)

- He’s Been Right About AI for 40 Years. Now He Thinks Everyone Is Wrong (Meghan Bobrowsky, Wall Street Journal, 11/2025)

- Why an AI 'godfather' is quitting Meta after 12 years (Liv McMahon, BBC, 11/2025)

- Yann LeCun, a Pioneering A.I. Scientist, Leaves Meta (Eli Tan, New York Times, 11/2025)

- Meta chief AI scientist Yann LeCun plans to exit and launch own start-up (Financial Times, 11/2025)

- AI ‘Godfather’ Yann LeCun: LLMs Are Nearing the End, but Better AI Is Coming (Marcus Weldon, Newsweek, 09/2025)

- Meta chief AI scientist Yann LeCun says current AI models lack 4 key human traits (Business Insider, 05/2025)

- Meta AI chief: ‘Inferiority complex’ is stunting European tech (Siôn Geschwindt, The Next Web, 05/2025)

- Yann LeCun, Pioneer of AI, Thinks Today's LLM's Are Nearly Obsolete (Gabriel Snyder, Newsweek, 04/2025)

- Driving Toward The Future: Yann LeCun Opines On The Shape Of AI (John Werner, Forbes, 04/2025)

- Well, it looks like Meta's Yann LeCun may have been right about AI - again (The Decoder, 02/2025)

- More Than One AI Revolution? Yann LeCun On Tech Trajectories (John Werner, Forbes, 02/2025)

- Yann Le Cun, vigie internationale de l'IA (Marine Protais, La Tribune, 02/2025)

- Yann LeCun : «L’AGI dans quelques années ? C’est simplement faux !» (Thierry Derouet, IT for Business, 02/2025)

- IA : Yann Le Cun au micro de BFM Business (BFM TV Business, 02/2025)

- Sommet de l’IA : Meta dévoile sa vision de l’avenir de l’intelligence artificielle (bye-bye les robots conversationnels) (Mathilde Cousin, 20minutes.fr, 02/2025)

- AI ‘godfather’ predicts another revolution in the tech in next five years (Dan Milmo, The Guardian, 02/2025)

- Machine-learning pioneer Yann LeCun on why “a new revolution in AI” is coming (The Economist, 02/2025)

- Meta Engineers See Vindication in DeepSeek’s Apparent Breakthrough (Cade Metz and Mike Isaac, New York Times, 01/2025)

- ChatGPT, DeepSeek, Or Llama? Meta’s LeCun Says Open-Source Is The Key (Luis Romero, Forbes, 01/2025)

- Meta's chief AI scientist says market reaction to DeepSeek was 'woefully unjustified.' Here's why (Business Insider, 01/2025)

- Meta’s Yann LeCun predicts ‘new paradigm of AI architectures’ within 5 years and ‘decade of robotics’ (Paul Sawers, Tech Crunch, 01/2025)

- Security Council Debates Use of Artificial Intelligence in Conflicts, Hears Calls for UN Framework to Avoid Fragmented Governance (United Nations Press, 12/2024)

- How Mark Zuckerberg has fully rebuilt Meta around Llama (Sharon Goldman, Fortune, 11/2024)

- ‘Open-source will win’: Meta’s Yann LeCun takes aim at competitors’ closed AI models (Business Today India, 10/2024)

- Future of AI will be like waking with three smart people working for you (The Times of India, 10/2024)

- This AI Pioneer Thinks AI Is Dumber Than a Cat (Wall Street Journal, 10/2024)

- AI safety showdown: Yann LeCun slams California’s SB 1047 as Geoffrey Hinton backs new regulations (VentureBeat, 08/2024)

- Yann Le Cun (Meta), rock star discrète de la tech et de l’IA (Challenges, 06/2024)

- Meta AI chief LeCun slams Elon Musk over ‘blatantly false’ predictions, spreading conspiracy theories (CNBC, 06/2024)

- 'New renaissance': Meta and Amazon bosses make the case for AI optimism (CNN, 06/2024)

- Yann Le Cun, le Frenchie qui tient tête à Elon Musk (Le Point, 06/2024)

- What is science? Tech heavyweights brawl over definition (Nature, 05/2024)

- AI pioneer LeCun to next-gen AI builders: Don't focus on LLMs (VentureBeat, 05/2024)

- social media feud highlights key differences in approach to AI research and hype (VentureBeat, 05/2024)

- Elon Musk Is Feuding With ‘AI Godfather’ Yann LeCun (Again)—Here's Why (Forbes, 05/2024)

- Meta AI chief says large language models will not reach human intelligence. Yann LeCun argues current AI methods are flawed as he pushes for ‘world modelling’ vision for superintelligence (Financial Times, 05/2024)

- Yann Le Cun, Méta nous présente JEPA, le futur de l'intelligence artificielle (Le Point, 04/2024)

- TIME 100 Impact Award: Yann Lecun Is Optimistic That AI Will Lead to a Better World (TIME, 02/2024)

- Meta’s AI Chief Yann LeCun on AGI, Open-Source, and AI Risk (TIME, 02/2024)

- Yann LeCun, chief AI scientist at Meta: Human-level artificial intelligence is going to take a long time (El PAis, 01/2024)

- How Not to Be Stupid About AI, With Yann LeCun (Wired Magazine, 12/2023)

- Inside the A.I. Arms Race That Changed Silicon Valley Forever (New York Times, 12/2023)

- AI pioneers Yann LeCun and Yoshua Bengio clash in an intense online debate over AI safety and governance (Venture Beat, 10/2023)

- In a Rare Outburst, Meta’s LeCun Blasts OpenAI, Turing Awardees. Meta Chief AI Scientist Yann LeCun is blasting fellow AI luminaries for overselling AI's existential threat and asking for regulation (Ai Business, 10/2023)

- Why Meta’s Yann LeCun isn’t buying the AI doomer narrative (FastCompany, 05/2023)

- In Battle Over A.I., Meta Decides to Give Away Its Crown Jewels (New York Times, 05/2023)

- Yuval Noah Harari (Sapiens) versus Yann Le Cun (Meta) on artificial intelligence (Le Point, 05/2023)

- Yann Le Cun, directeur à Meta : « L’idée même de vouloir ralentir la recherche sur l’IA s’apparente à un nouvel obscurantisme » (Le Monde, 04/2023)

- Yann Le Cun, director at Meta: 'The very idea of wanting to slow down AI research is akin to a new form of obscurantism' (Le Monde 04/2023)

- Yann LeCun’s vision for creating autonomous machines (VentureBeat, 08/2022)

- Yann LeCun has a bold new vision for the future of AI (MIT Tech Review 06/2022)

- Yann LeCun: AI Doesn’t Need Our Supervision. Meta’s AI chief says self-supervised learning can build the metaverse and maybe even human-level AI (IEEE Spectrum 02/2022)

- Yann Le Cun : « Les applications bénéfiques de l’intelligence artificielle vont, de loin, l’emporter » (Le Monde 02/2020)

- Yann LeCun, lauréat du prix Turing : « L’IA continue de faire des progrès fulgurants » (Le Monde 03/2019)

- Turing Award Won by 3 Pioneers in Artificial Intelligence (New York Times 03/2019)

- Un Français lauréat du prix Turing, avec deux autres pionniers de l'IA (Le Point, 03/2019)

- Inside Facebook's fight to beat Google and dominate in AI (Wired 11/2018)

- Dehaene et Le Cun, le choc des cerveaux: Entretien avec le neuroscientifique Stanislas Dehaene et le chercheur Yann Le Cun, auteurs de « La Plus Belle histoire de l'intelligence » (Robert Laffont) (Le Point, 11/2018)

- Facebook AI chief Yann LeCun is stepping aside to take on dedicated research role (The Verge, 01/2018)

- Yann LeCun : « Aujourd'hui, Facebook est entièrement construit autour de l'intelligence artificielle » (Le Monde, 10/2017)

- Yann LeCun s’explique sur les travaux de Facebook sur l’intelligence artificielle (Le Monde, 10/2017)

- Yann Le Cun, le Monsieur Intelligence artificielle de Facebook. Le Français Yann Le Cun dirige depuis quatre ans le laboratoire d'IA du réseau social, à New York. Profession : apprendre aux machines à réfléchir. (Le Point, 03/2017)

- Yann Le Cun : "On ne peut pas séparer les notions d'intelligence et d'apprentissage" (Le Point, 02/2016)

- La leçon d’un maître de l’intelligence artificielle au Collège de France (Le Monde, 02/2016)

- Portrait: Yann LeCun, le temps des machines (Libération, 09/2015)

- Intelligent Machines: What does Facebook want with AI? (BBC, 09/2015)

- Teaching Machines to Understand Us (MIT Tech Review, 08/2015)

- Welcome to the AI Conspiracy: The 'Canadian Mafia' Behind Tech's Latest Craze (Vox, 07/2015)

- Facebook Opens a Paris Lab as AI Research Goes Global (Wired, 06/2015)

- Yann LeCun, l’intelligence en réseaux. Adulé dans sa spécialité, le « deep learning », ce chercheur recruté par Facebook dirige le laboratoire d’intelligence artificielle créé à Paris par le réseau social. (Le Monde, 06/2015)

- Yann LeCun : « L’intelligence artificielle reste un défi scientifique » (Les Echos, 06/2015)

- Facebook ouvre un laboratoire d’intelligence artificielle à Paris (Le Monde, 06/2015)

- Yann LeCun, l’intelligence en réseaux. Adulé dans sa spécialité, le « deep learning », ce chercheur recruté par Facebook dirige le laboratoire d’intelligence artificielle créé à Paris par le réseau social. (Le Monde, 06/2015)

- 'Deep Learning' Will Soon Give Us Super-Smart Robots (Wired, 05/2015)

- Facebook's Quest to Build an Artificial Brain Depends on This Guy (Wired 08/2014)

- Facebook's 'Deep Learning' Guru Reveals the Future of AI (Wired 12/2013)

- Facebook Taps 'Deep Learning' Giant for New AI Lab (Wired 12/2013)

- Eye robot (The Economist, 10/2010)

| Older Content |

[stuff below this line is badly out of date]

| Quick Links |

- Center for Data Science, and the NYU Data Science Portal.

- Computational and Biological Learning Lab, my research group at the Courant Institute, NYU.

- CILVR Lab: Computational Intelligence, Vision Robotics Lab: a lab with many NYU faculty, students and postdocs working on AI, ML and applications thereof such as computer Vision, NLP, robotics, and healthcare.

- Research: descriptions of my projects and contributions, past and present.

- Publications: (almost) all of my publications, available in PDF and DjVu formats.

- Google Scholar Profile: all my publications with number of citations, harvested by Google.

- Preprints on ArXiv.org: where you will find our latest results, before they may receive a stamp of approval.

| Computational and Biological Learning Lab |

| My lab at the Courant Institute of New york University is called the Computational and Biological Learning Lab. |  |

See research projects descriptions, lab member pages, events, demos, datasets...

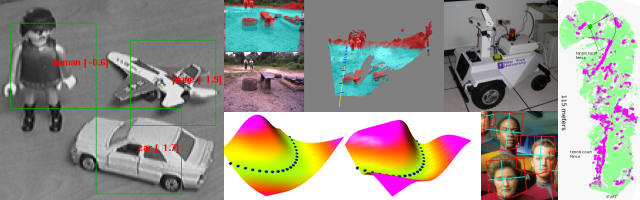

We are working on a class of learning systems called Energy-Based Models, and Deep Belief Networks. We are also working on convolutional nets for visual recognition , and a type of graphical models known as factor graphs.

We have projects in computer vision, object detection, object recognition, mobile robotics, bio-informatics, biological image analysis, medical signal processing, signal processing, and financial prediction,....

| Teaching |

Jump to my course page at NYU, and see course descriptions, slides, course material...

| Talks and Tutorials |

See, watch and hear talks and tutorial.

| Deep Learning |

Animals and humans can learn to see, perceive, act, and communicate with an efficiency that no Machine Learning method can approach. The brains of humans and animals are "deep", in the sense that each action is the result of a long chain of synaptic communications (many layers of processing). We are currently researching efficient learning algorithms for such "deep architectures". We are currently concentrating on unsupervised learning algorithms that can be used to produce deep hierarchies of features for visual recognition. We surmise that understanding deep learning will not only enable us to build more intelligent machines, but will also help us understand human intelligence and the mechanisms of human learning.

| Relational Regression |

We are developing a new type of relational graphical models that can be applied to "structured regression problem". A prime example of structured regression problem is the prediction of house prices. The price of a house depends not only on the characteristics of the house, but also of the prices of similar houses in the neighborhood, or perhaps on hidden features of the neighborhood that influence them. Our relational regression model infers a hidden "desirability sruface" from which house prices are predicted.

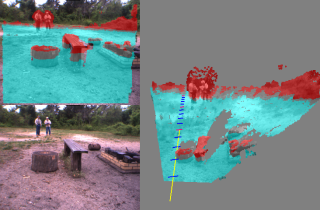

| Mobile Robotics |

|

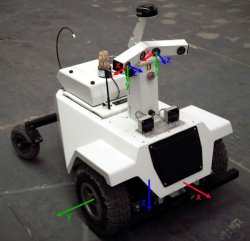

The purpose of the LAGR project,

funded by the US government, is to design vision and learning algorithms

to allow mobile robots to navigate in complex outdoors

environment solely from camera input.

My Lab, collaboration with Net-Scale Technologies is one of 8 participants in the program (Applied Perception Inc., Georgia Tech, JPL, NIST, NYU/Net-Scale, SRI, U. Penn, Stanford). Each LAGR team received identical copies of the LAGR robot, built be the CMU/NREC.

|

|

|

The government periodically runs competitions between the teams.

The software from each team is loaded and run by the goverment team

on their robot.

The robot is given the GPS coordinates of a goal to which it must drive as fast as possible. The terrain is unknown in advance. The robot is run three times through the test course. The software can use the knowledge acquired during the early runs to improve the performance on the latter runs.

|

|

CLICK HERE FOR MORE INFORMATION, VIDEOS, PICTURES >>>>>.

Prior to the LAGR project, we worked on the DAVE project, an attempt to train a small mobile robot to drive autonomously in off-road environments by looking over the shoulder of a human operator.

CLICK HERE FOR INFORMATION ON THE DAVE PROJECT >>>>>.

| Energy-Based Models |

|

Energy-Based Models (EBMs) capture dependencies between variables by

associating a scalar energy to each configuration of the

variables. Inference consists in clamping the value of observed

variables and finding configurations of the remaining variables that

minimize the energy. Learning consists in finding an energy

function in which observed configurations of the variables are given

lower energies than unobserved ones. The EBM approach provides a

common theoretical framework for many learning models, including

traditional discriminative and generative approaches, as well as

graph-transformer networks, conditional random fields, maximum margin

Markov networks, and several manifold learning methods.

Probabilistic models must be properly normalized, which sometimes requires evaluating intractable integrals over the space of all possible variable configurations. Since EBMs have no requirement for proper normalization, this problem is naturally circumvented. EBMs can be viewed as a form of non-probabilistic factor graphs, and they provide considerably more flexibility in the design of architectures and training criteria than probabilistic approaches. |

|

CLICK HERE FOR MORE INFORMATION, PICTURES, PAPERS >>>>>.

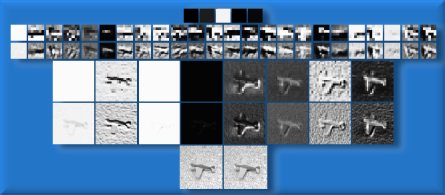

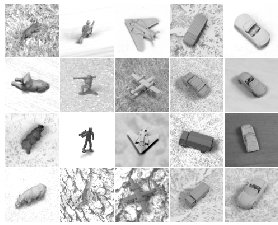

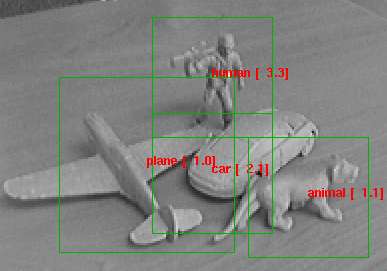

| Invariant Object Recognition |

|

|

|

The recognition of generic object categories with invariance to pose,

lighting, diverse backgrounds, and the presence of clutter is one of

the major challenges of Computer Vision.

I am developing learning systems that can recognize generic object purely from their shape, independently of pose and lighting. See The NORB dataset for generic object recognition is available for download. |

|

CLICK HERE FOR MORE INFORMATION, PICTURES, PAPERS >>>>>.

| Lush: A Programming Language for Research |

Lush combines three languages in one: a very simple to use, loosely-typed interpreted language, a strongly-typed compiled language with the same syntax, and the C language, which can be freely mixed with the other languages within a single source file, and even within a single function.

Lush has a library of over 14,000 functions and classes,

some of which are simple interfaces to popular libraries:

vector/matrix/tensor algebra, linear algebra (LAPACK, BLAS),

numerical function (GSL), 2D and 3D graphics (X, SDL, OpenGL,

OpenRM, PostScipt), image processing, computer vision (OpenCV),

machine learning (gblearning, Torch), regular expressions,

audio processing (ALSA), and video grabbing (Video4linux).

Lush has a library of over 14,000 functions and classes,

some of which are simple interfaces to popular libraries:

vector/matrix/tensor algebra, linear algebra (LAPACK, BLAS),

numerical function (GSL), 2D and 3D graphics (X, SDL, OpenGL,

OpenRM, PostScipt), image processing, computer vision (OpenCV),

machine learning (gblearning, Torch), regular expressions,

audio processing (ALSA), and video grabbing (Video4linux).

If you do research and development in signal processing, image processing, machine learning, computer vision, bio-informatics, data mining, statistics, or artificial intelligence, and feel limited by Matlab and other existing tools, Lush is for you. If you want a simple environment to experiment with graphics, video, and sound, Lush is for you. Lush is Free Software (GPL) and runs under GNU/Linux, Solaris, and Irix.

| DjVu: The Document Format for Digital Libraries |

![]()

My main research topic until I left AT&T was the

DjVu project.

DjVu is a document format, a set of compression methods and a software

platform for distributing scanned and digitally produced documents on the Web.

DjVu image files of scanned documents are typically 3-8 times

smaller than PDF or TIFF-groupIV for bitonal and 5-10 times

smaller than PDF or JPEG for color (at 300 DPI). DjVu versions

of digitally produced documents are more compact and render

much faster than the PDF or PostScript versions.

My main research topic until I left AT&T was the

DjVu project.

DjVu is a document format, a set of compression methods and a software

platform for distributing scanned and digitally produced documents on the Web.

DjVu image files of scanned documents are typically 3-8 times

smaller than PDF or TIFF-groupIV for bitonal and 5-10 times

smaller than PDF or JPEG for color (at 300 DPI). DjVu versions

of digitally produced documents are more compact and render

much faster than the PDF or PostScript versions.

Hundreds of websites around the world are using DjVu for Web-based and CDROM-based document repositories and digital libraries.

- Yann's DjVu page: a description of DjVu, and a set of useful links.

- Technical talk on DjVu: watch a streaming video of Yann's Distinguished Lecture at the University of Illinois at Urbana-Champaign, October 22 2001. (100K Windows Streaming Media). (56K Windows Streaming Media),

- DjVuZone.org: samples, demos, technical information, papers, and tutorials on DjVu.... DjVuZone hosts several digital libraries, including NIPS Online.

- DjVuLibre for Unix: free/open-source browser plug-ins, viewers, utilites, and libraries for Unix.

- Commercial DjVu Software: free plug-ins for Windows and Mac, free and commercial applications for Windows and some Unix platforms (hosted at LizardTech, the company that distributes and supports DjVu under license from AT&T).

- Any2DjVu and Bib2Web: Upload your documents and get them converted to DjVu. Bib2Web automates the creation of publication pages for researchers.

| Learning and Visual Perception |

My main research interest is machine learning, particularly how it applies

to perception, and more particularly to visual perception.

My main research interest is machine learning, particularly how it applies

to perception, and more particularly to visual perception.

I am currently working on two architectures for gradient-based perceptual learning: graph transformer networks and convolutional networks.

Convolutional Nets are a special kind of neural net architecture designed to recognize images directly from pixel data. Convolutional Nets can be trained to detect, segment and recognize objects with excellent robustness to noise, and variations of position, scale, angle, and shape.

Have a look at the animated demonstrations of LeNet-5, a Convolutional Nets trained to recognize handwritten digit strings.

Convolutional nets and graph transformer networks are embedded in several high speed scanners used by banks to read checks. A system I helped develop reads an estimated 10 percent of all the checks written in the US.

Check out this page, and/or read this paper to learn more about Convolutional Nets and graph transformer networks.

| MNIST Handwritten Digit Database |

The MNIST database contains 60,000 training samples and 10,000 test samples of size-normalized handwritten digits. This database was derived from the original NIST databases.

MNIST is widely used by researchers as a benchmark for testing pattern recognition methods, and by students for class projects in pattern recognition, machine learning, and statistics.

| Music and Hobbies |

I have several interests beside my family (my wife and three sons) and my research:

- Playing Music: particularly Jazz, Renaissance and Baroque music. A few MP3 and MIDI files of Renaissance music are available here.

- Building and flying miniature flying contraptions:

preferably battery powered, radio controled, and unconventional in their design.

- Building robots: particularly Lego robots (before the days of the Lego Mindstorms)

- Hacking various computing equipment: I have owned 5 computers between 1978 and 1992: SYM-1, OSI C2-4P, Commodore 64, Amiga 1000, Amiga 4000. then I lost interest in personal computing when the only thing you could get was a boring Wintel box. Then, Linux appeared and I came back to life.....

- Sailing: I own two sport catamarans, a Nacra 5.8 and a Prindle 19. I also sail and race larger boats with friends.

- Graphic Design: I designed the DjVu logo and much of the AT&T DjVu web site.

- Reading European comics. Comics in certain European countries (France, Belgium, Italy, Spain) are considered a true art form ("le 8-ieme art"), and not just a business with products targeted at teenagers like on this side of the pond. Although I don't have a shred of evidence to support it, I claim to have the largest private collection of French-language comics in the Eastern US.

- making bad puns in French, but I don't have much of an audience this side of the pond.

- Sipping wine, particularly red, particularly French, particularly Bordeaux, particularly Saint-Julien.

| Bib2Web: Automatic Creation of Publication Pages |

| Photos Galleries |

- Photos taken at various conferences, workshops, trade shows and other professional events. Includes pictures from CVPR, NIPS, Learning@Snowbird, ICDAR, CIFED, etc.

- A photo and movie gallery of various radio-controled airplanes, other miniature flying objects, lego robots, and other techno toys. Check out also my model airplane page.

- Miscellaneous artsy and nature picture, including garden-variety wild animals, landscapes, etc.

- Vintage airplanes at the national air and space museum in Le Bourget, near Paris.

| Fun Stuff |

- No, Yann is NOT Philippe Kahn's evil brother

- Your Name can't possibly be pronounced that way: or how a Nobel prize winner tried to tell me how to pronounce my own name.

- Who is Tex Avery anyway?

- Steep Learning Curves and other erroneous metaphores

- Vladimir Vapnik meets the video game sub-culture

- Cheap Philosophy (42 cents)

- A Mathematical Theory of Empty Disclaimers

- The Axis of Rivals

| Previous Life |

My former group at AT&T (the Image Processing Research Department) and its ancestor (Larry Jackel's Adaptive Systems Research Department) made numerous contributions to Machine Learning, Image Compression, Pattern Recognition, Synthetic Persons (talking heads), and Neural-Net Hardware. Specific contributions not mentioned elsewhere on this site include the ever so popular Support Vector Machine, the PlayMail and Virt2Elle synthetic talking heads, the Net32K and ANNA neural net chips, and many others. Visit my former group's home page for more details.

| Links |

Links to interesting places on the web, friends' home pages, etc .